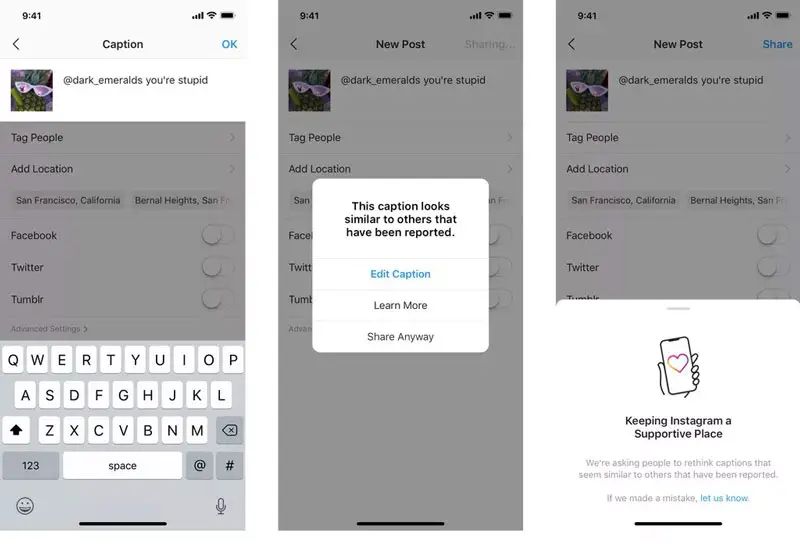

Instagram will now start to warn users before they post a “potentially offensive” caption, allowing them to reconsider posting.

Instagram has made the safety of all users on its platform a priority. This week, the company announced that it is rolling out an AI-powered tool that analyzes captions in real time, before they are published, and warns users when a caption may be offensive.

The implementation is simple: the tool automatically generates a notification telling users that their caption “looks similar to others that have been reported.”

Instagram will not punish you for posting a “potentially offensive” caption. Instead, it encourages you to reconsider and edit the caption, though it still gives you the option to post it as is.

The new feature is built on the same AI that the company introduced for comments back in July.

Instagram says the new feature is rolling out in “select countries” for now, but it will expand globally over the coming months.
