As part of its effort to rid the platform of misinformation, Facebook is expanding its penalties for users who repeatedly share it.

In addition to bringing new ways to inform users when they interact with content rated by fact-checkers, Facebook will soon also be taking “stronger action” against those who repeatedly share misinformation.


According to Facebook, that misinformation includes “false or misleading content about COVID-19 and vaccines, climate change, elections or other topics.”

In 2016, Facebook launched a fact-checking program to reduce viral misinformation, and over time it’s taken action against Pages, Groups, Instagram accounts, and domains that share misinformation on its platform.

The company is now extending that action to individual accounts, bringing stronger penalties against users who repeatedly share content that fact-checkers have rated as misinformation. Penalties include reducing the distribution of all of those users' posts in News Feed. Single posts that have been debunked by fact-checkers already have their distribution reduced.

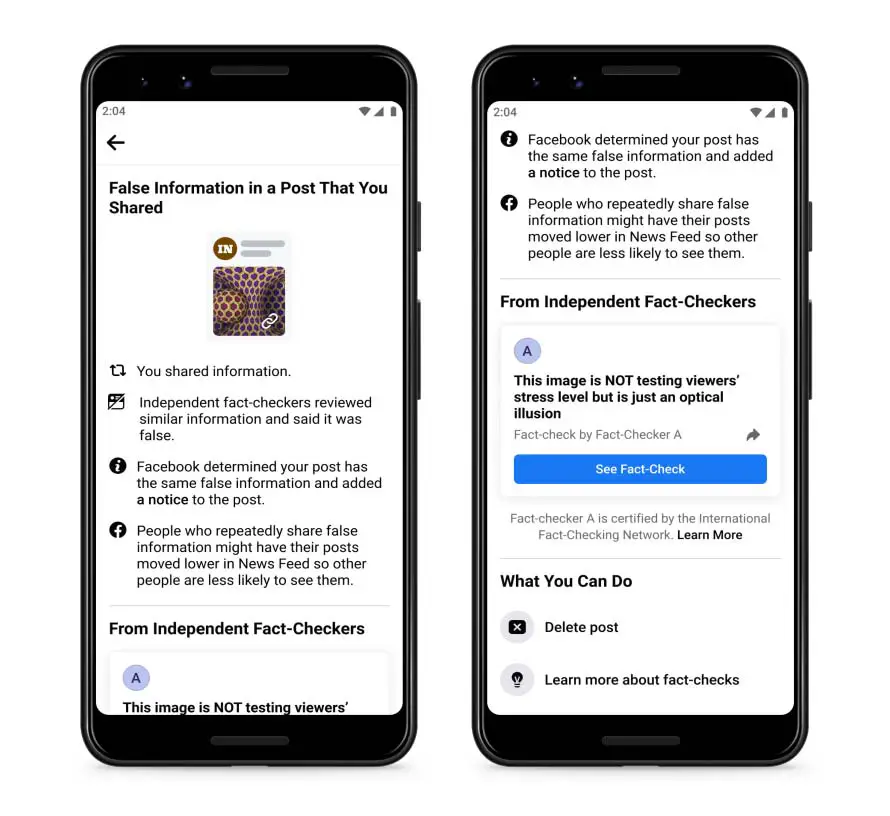

Facebook already lets people know when content they post is later rated by a fact-checker, and it is now rolling out a new set of notifications that provide context when this occurs. The notifications include a link to the fact-checker's article debunking the false claim, and users are encouraged to share that article with their followers.

Finally, users who repeatedly post misinformation will receive a notification telling them so, and that their posts have been moved lower in News Feed.

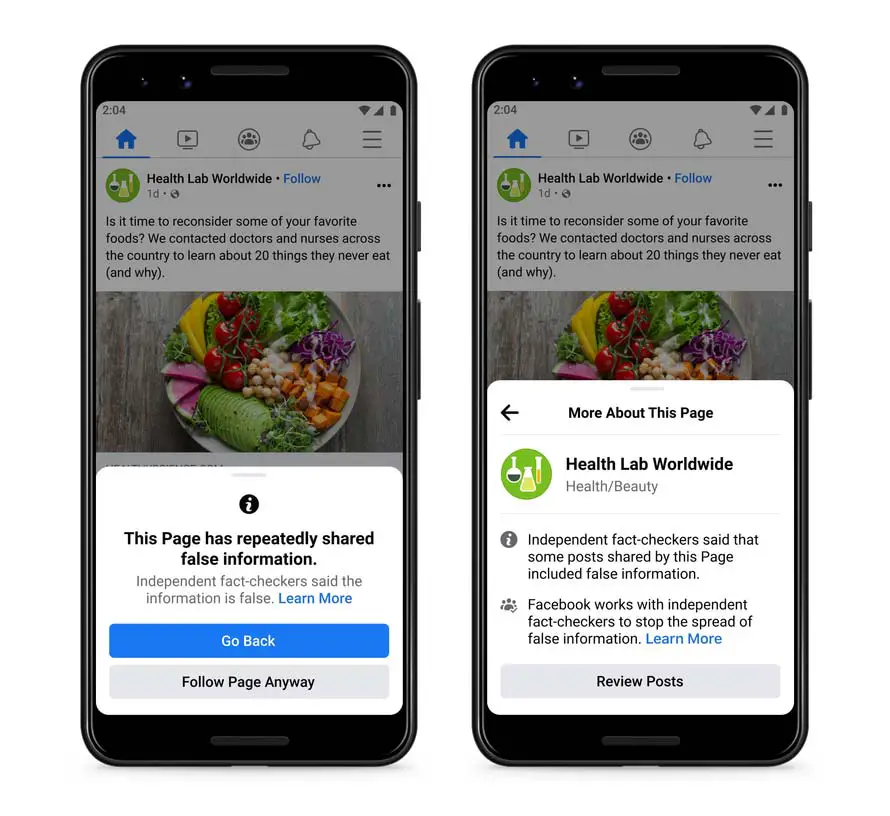

As for Pages, Facebook will now show visitors a pop-up warning them that a Page has repeatedly shared misinformation. This, it hopes, will stop people from following such Pages.

In other recent news, Facebook is testing a new feature that encourages users to read an article before sharing it.