WhatsApp has always sold itself on privacy. Now Meta has to prove that promise can survive the AI layer.

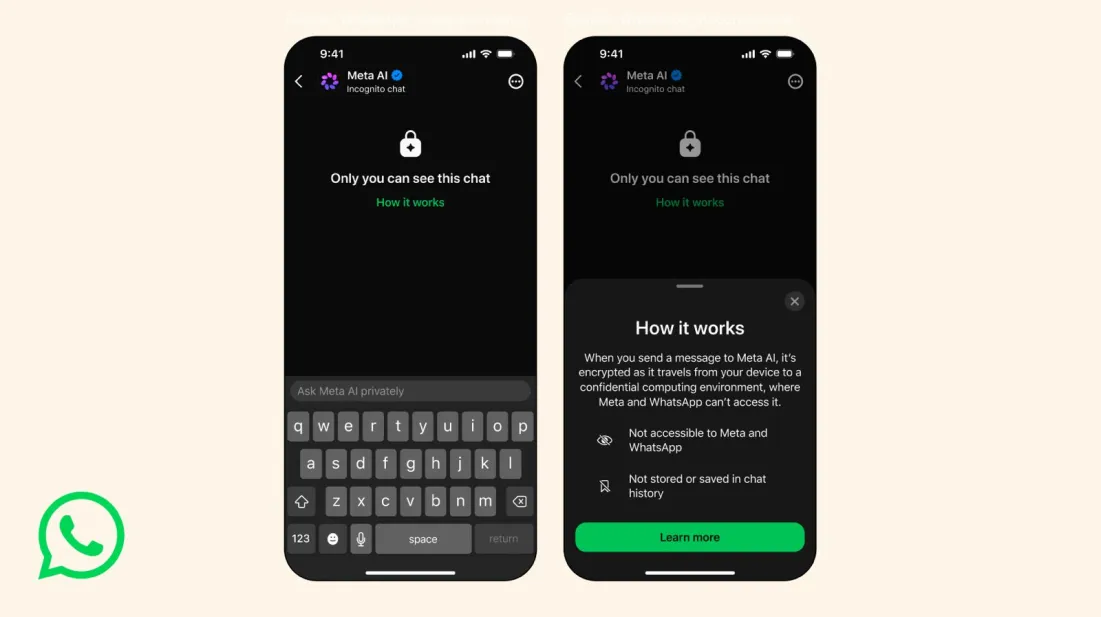

The company is adding an incognito mode for Meta AI chats inside WhatsApp, giving users a way to start private AI conversations that are not saved and disappear by default once the chat is closed. The feature will roll out to WhatsApp and the standalone Meta AI app over the next few months.

Users will be able to start an incognito session from a new icon in one-on-one chats with Meta AI. If the app is closed or the phone is locked, the session ends, the messages disappear, and Meta AI loses the context of that conversation.

That is not just a privacy feature. It is a recognition of what AI chats are becoming.

When AI Becomes The Place For Private Questions

People do not only use chatbots to write captions, summarize documents, or plan trips. Increasingly, they use them for things they may not want attached to a permanent account history: health questions, money worries, relationship advice, workplace messages, emotional decisions, awkward replies.

That makes AI different from search in one important way. Search often captures intent. AI conversations can capture vulnerability.

For WhatsApp, that distinction matters. The app already sits inside some of the most intimate communication habits in the world. Bringing Meta AI into that environment creates obvious utility, but also an obvious trust problem.

An incognito mode is Meta’s attempt to answer that problem before it becomes a blocker.

Privacy Becomes Product Strategy

Meta has been laying the groundwork for this for a while. WhatsApp has already explained its private processing infrastructure, designed to support AI features without breaking the app’s end-to-end encryption promise. It has also started using that architecture for AI-powered message summaries.

The new incognito chats go further because they frame privacy not as a settings page, but as a mode of interaction.

That is important. Most users do not want to read a technical explanation before asking a sensitive question. They want a clear signal that says: this conversation is different.

In that sense, “incognito” is doing product work and brand work at the same time. It gives users a familiar mental model, borrowed from private browsing, and applies it to AI.

The Bigger Shift: AI Inside The Conversation Layer

The timing also matters because Meta is not stopping at one-on-one AI chats. WhatsApp is also working on Side Chat, a feature that would let users privately ask Meta AI questions inside existing chats without showing the AI interaction to other participants.

That could be a much bigger behavioral shift.

Right now, invoking AI in a group or one-on-one chat can feel exposed. If users can quietly ask AI for help interpreting, replying, translating, summarizing, or checking something inside a conversation, AI becomes less like a separate destination and more like an invisible assistant sitting beside the message thread.

That is where messaging apps become powerful AI surfaces. Not because people open them to “use AI,” but because AI appears exactly where uncertainty happens.

The Trust Problem Is Not Going Away

Meta is not alone here. ChatGPT and Claude already offer temporary or incognito-style chats, while companies like DuckDuckGo and Proton have been pushing privacy-first AI experiences.

The broader market is moving in the same direction for a reason. As AI becomes more personal, the question is no longer only whether the answer is useful. It is whether the user feels safe asking the question in the first place.

That question becomes even sharper as legal and workplace conversations around AI records become more serious. If chatbot conversations can be stored, reviewed, made discoverable, or used out of context, private modes stop looking like a niche feature and start looking like basic infrastructure.

For brands, platforms, and product teams, the lesson is simple: AI trust cannot live only in policy language. It has to be visible in the interface.

WhatsApp’s incognito mode may sound like a small toggle. But it points to a much larger reality: the more personal AI becomes, the more privacy has to become part of the product itself.